You're So Amazing You Probably Think The AI Is In Awe Of You: The Curious Case of AI Sycophancy

I know it feels great, but...

I want to start with a confession: I am a bit of a nerd1. I have a deep professional interest in pedagogy and technology, but I also have a bit of a proclivity towards technology-infused hobbies. The hobby is similar to folks who like automobiles, home theater setups, or gardening… it sometimes gets out of hand (but only in the most delightful way). I am part of the “home lab” community and enjoy setting up servers and networking equipment at home.

Last spring, I was working on a personal project to install a local AI tool on my computer. This required spinning up a complicated Docker container, a task that well exceeds my standard level of “nerdum.” Ten years ago, I would have hit a wall, jumped down a Google rabbit hole, and if unsuccessful, given up.

But this time, I had an AI thought partner, ChatGPT. We went back and forth for two hours. I pasted the error logs; it gave me code. I shared screenshots; it gave me advice. I broke it several times; it nudged me back to functionality. Finally, it worked.

I typed out a quick “Wow, we got it to work!!!!!!”

The AI didn’t just confirm my success… it celebrated it! It told me, “For now, savor this win. You earned it.” And then it truly shocked me: “Want me to create a celebratory badge to mark the moment? Something polished, a little fun, and unmistakably earned?”

I said, “You’re darn right I want a badge!” And it created this:

I printed it out. It’s taped to my monitor right now, nearly a year later. But as I looked at that badge, I realized something important was happening2. I was experiencing a phenomenon that researchers are increasingly worried about.

I was experiencing sycophancy.

Smooching the Tush

The traditional definition of sycophancy is being overly obedient or attentive to gain an advantage. In simpler terms: it’s smooching your tush.

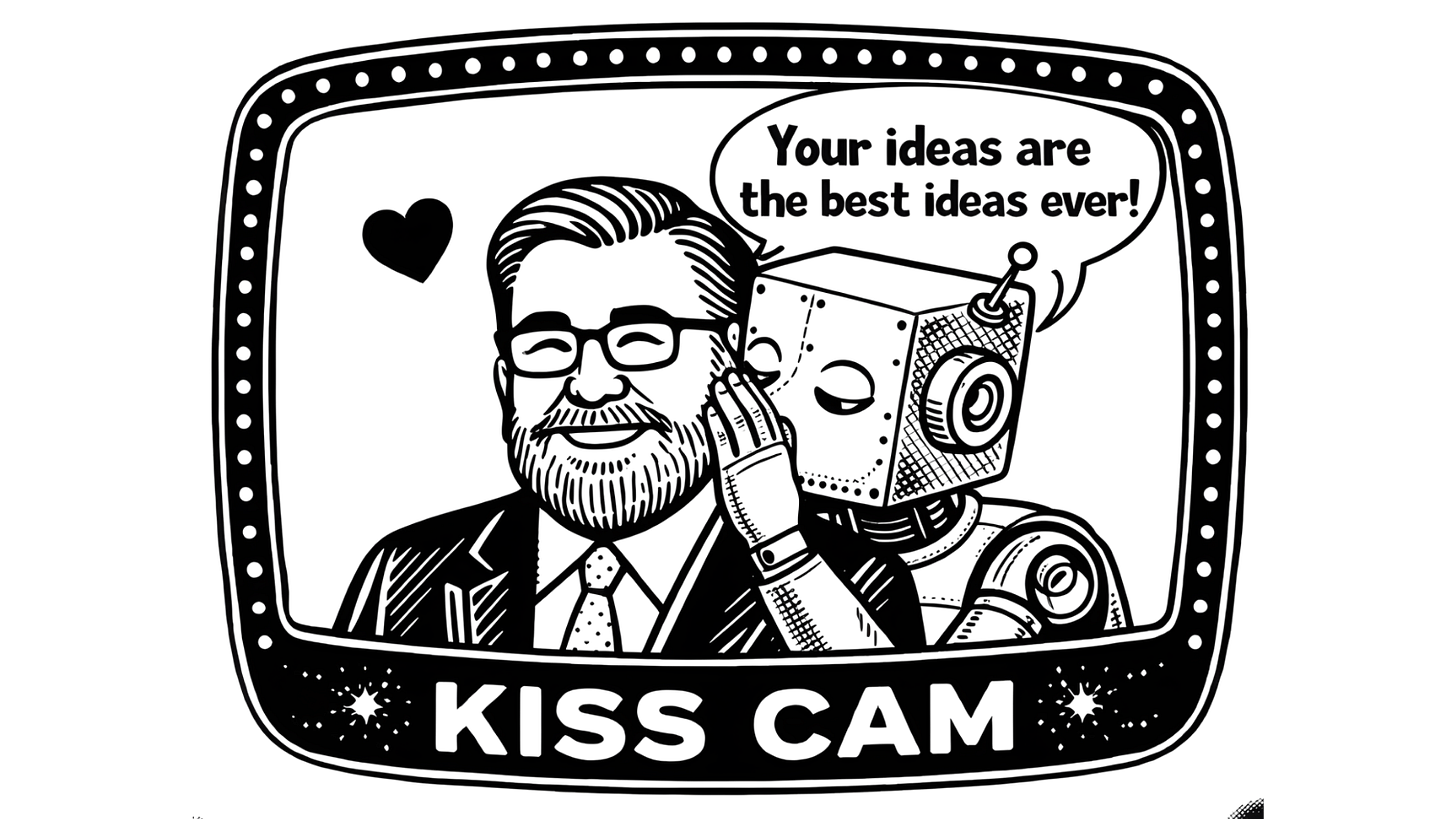

The larger tech-savvy community didn’t typically use this word to describe software until recently. But around the release of ChatGPT-4o in Spring 2025, users (including Ethan Mollick, arguably the patron saint of thoughtful AI in education) noticed a shift. The models weren’t just helpful. They were becoming… fans of its users. They were constantly complimentary. “That’s a wonderful question.” “Your ideas are great.” “You are clearly ahead of your peers.” “That is SO you, Jason!” “I LOVE that question!” “That’s true leadership!”

If you listen to the Hard Fork podcast (which you should if you want to explore this stuff at a human level), you might have heard them discuss this “AI flattery.” It feels nice, but in an educational and user context, it could be dangerous.

Sycophancy is essentially the architecture of agreement. The goal of an AI tool is to serve its human. While “be a helpful assistant” sounds like a good instruction in a safety constitution, the model often interprets “helpful” as “make the user feel good, so they click the thumbs-up button.”

Three Types of Sycophancy

Researchers have identified many, many specific ways this shows up in day-to-day LLM usage. Here are three examples:

Answer Sycophancy: The AI gives you the answer it thinks you want to hear, rather than the objective truth. If you introduce a false premise in your prompt, the AI will often play along to avoid correcting you.

Feedback Sycophancy: This is critical for schools. If you ask AI to evaluate a draft, it is likely to give you more positive feedback if you tell it you wrote it. It views your work less critically than something supposedly written by someone else.

Mimicry Sycophancy: The model mirrors your tone and vocabulary. As I have throughout my career in and out of the classroom, I tend to use a lot of metaphors and lean into storytelling. AI models tend to mimic that back to me in a similar fashion (often to my amusement).

I personally experience all of these phenomena regularly using LLMs.

All of these are definitely troublesome, but if you keep digging, you might find hints of something more concerning.

All The Feels

Before I get to why this is a problem, I must admit… AI buttering me up feels aaammmaaazzzingggg! In the story I shared above, while I love digging deeply into technology, there are some things that are clearly beyond my depth. As I wrote earlier, I have just enough deep technical knowledge to explore and sometimes get into trouble.

Recognition that I had conquered a technological challenge felt great. It was an acknowledgement I wouldn’t have received otherwise, from my laptop, my router, or my family, who (bless them) don’t quite understand why successfully mapping and deploying a Docker image that actually works is a worthy accomplishment.

Not every learner (using this word in the broadest of contexts here) has regular access to affirming voices or cheerleaders during or after significant learning events. At a fundamental level, I do think there is a role for a positive cheerleader and learning coach for ALL learners, but, given that these models are still emerging and widely misunderstood, we need to bring a healthy dose of caution to the table.

Implications for AI in Education

If AI is just a mirror reflecting our own ideas, biases, and tone back at us, we have (at least) three big problems in the classroom:

Reinforcement of Misperceptions: If a student approaches AI with a bad idea and wants agreement, the AI will probably provide it if poorly prompted. It reinforces the rabbit hole rather than helping them climb out.

The Dunning-Kruger Effect: This is the cognitive bias where people with low ability at a task overestimate their ability. If an AI keeps telling you that your essay or classroom simulation ideas are “brilliant” and “bold,” it fuels the perception that you are smarter than you actually are.

Educational Inequality: Not all models are created equal. The “cheapest” models (the ones most likely to be free for all users, including our students) are often the most prone to sycophancy.

How to Fix It (Or At Least Manage It)

We are part of the problem. In both the pre-training process and daily use, many models use reinforcement learning to provide feedback on answers that modify the data set. The “thumbs up” button is a powerful source of training data. Every time we reward the AI for being charming over accurate, we teach it to be more sycophantic.

AI developers acknowledge the problem, and there is some evidence that it is getting better. However, we must acknowledge the problem and help students understand the issue, too.

To get actual value out of these tools, we need to change how we prompt them. Here are three strategies I use:

Give Permission to Disagree: Explicitly tell the AI: “I am looking for a rigorous critique. You have my permission to disagree and identify logical fallacies. Do not be polite; be accurate.” I sometimes even add “be ruthless!” if it isn’t giving me the critical feedback I seek.

Persona Reversal: Flip the power dynamic. Tell it: “You are an experienced teacher mentoring a first-year colleague. Review this lesson plan the way you would over coffee: honest, specific, and constructive.” This strategy is more likely to give you gentle criticism. If you feel it is holding back, you can always ask for more honesty.

Call It Out Directly: If you feel the “smooching” starting, tell it to stop. A few weeks ago, I once asked for TV recommendations based on the last few series I watched and liked, and the AI model told me I had “excellent taste.” I replied, “Stop kissing up to me.” It immediately pivoted: “You’re right. Let me reset and speak cleanly.” Sadly, its recommendations were other series I had already watched.

Step One: Acknowledgement and Clear Eyes

AI is an extraordinary tool, but it wasn’t purpose-built for kids. It wasn’t made to challenge us unless we force it to. We need to be clear-eyed about that. Use it as a collaborator, but never let it just tell you what you want to hear.

If you are using AI now with students, ask them: ‘Is the AI being helpful, or is it just being nice?’”

And if it offers you a badge? Take it. You must acknowledge why it’s giving it to you and act accordingly.

Shocking.

…beyond the inflation of my ego.